From PoCs to production: the architectural requirements of AI

In recent years, companies have started experimenting with artificial intelligence in their applications and platforms through proofs of concept and isolated prototypes. The transition to production, however, often proves more complex than expected, because critical issues emerge that must be addressed before an operational release.

These issues include the security of data processed by AI, economic sustainability in the medium and long term, and, more generally, the ability to design an infrastructure that can evolve over time, integrate different models, and support high workloads. In Europe, these aspects are intertwined with jurisdiction, data localization, and regulatory compliance requirements, which call for informed architectural choices from the earliest design stages.

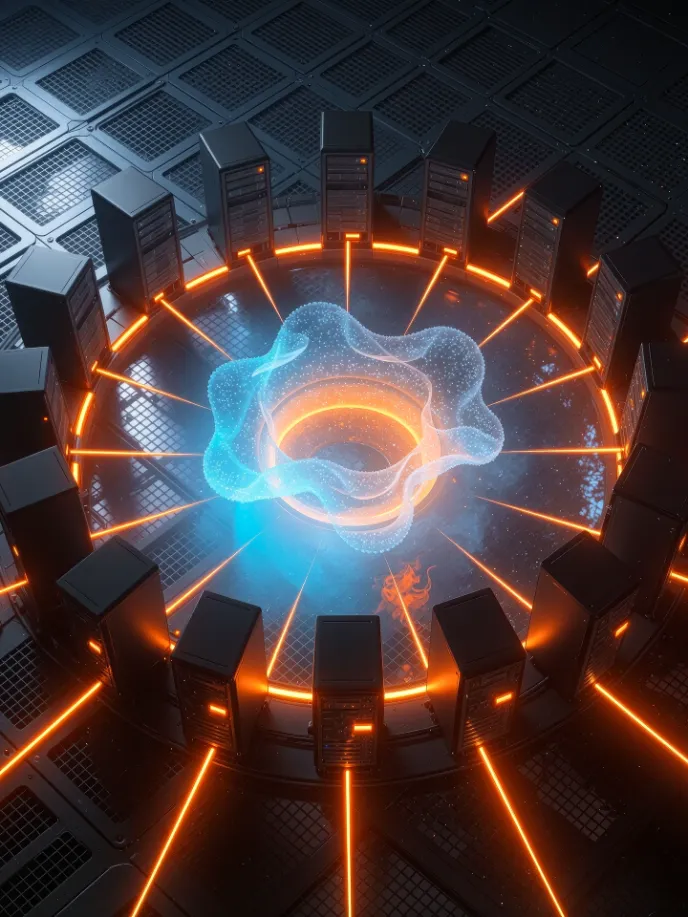

High-performance compute

Scalable resources for training and inference of AI models, with consistent performance and control over processing.

Multi-model integration

A programmable API layer for integrating AI models into applications and automating business processes.

Governance and compliance

Structured management of data and models, in line with regulatory requirements and security standards.

Control over costs and technologies

Transparent pricing models that make it possible to plan spending while keeping the flexibility to evolve over time.